In the previous posts we saw how to build microservices. Now it’s time to deploy them. As you might expect, there are several strategies for this as well.

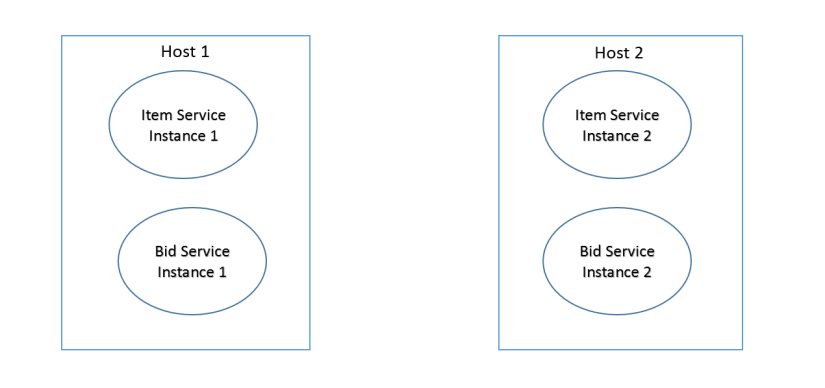

Multiple Service Instances per Host Pattern

The host can be physical or virtual. Each service may run in its own JVM process, or several services may share one; for example, they can be bundles in an OSGi container.

Deployments are fast in this case: just copy the packaged applications to the application server and start them. It’s as easy as that. Despite its simplicity, this pattern makes it hard to monitor the resources each service uses, and even harder to limit them. Starting each service in its own process improves things, but not by much. Running all the services in the same JVM process is the worst option, because one misbehaving service can take down the others. The services are also coupled to the host: if the host goes down, every service on it stops working.
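To make the "one process per service" variant concrete, here is a minimal sketch that builds the command line for each service JVM and caps its heap with the standard `-Xmx` flag. The class `ServiceLauncher`, its methods, and the jar names are hypothetical, chosen only for illustration.

```java
import java.io.IOException;
import java.util.List;

// Sketch only: class name, method names, and jar names are assumptions.
final class ServiceLauncher {

    // Builds the command line for one service JVM with a capped heap.
    static List<String> commandFor(String jarPath, int maxHeapMb) {
        return List.of("java", "-Xmx" + maxHeapMb + "m", "-jar", jarPath);
    }

    // Starts the service as a separate OS process on the same host.
    static Process launch(String jarPath, int maxHeapMb) throws IOException {
        return new ProcessBuilder(commandFor(jarPath, maxHeapMb))
                .inheritIO() // forward the child's stdout/stderr to this console
                .start();
    }

    public static void main(String[] args) {
        // Print the commands instead of launching, so the sketch has no side effects.
        System.out.println(commandFor("orders-service.jar", 256));
        System.out.println(commandFor("billing-service.jar", 512));
    }
}
```

Note that `-Xmx` only caps the Java heap. CPU time, threads, and native memory are still shared with the other services on the host, which is why separate processes help but do not fully solve the isolation problem.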

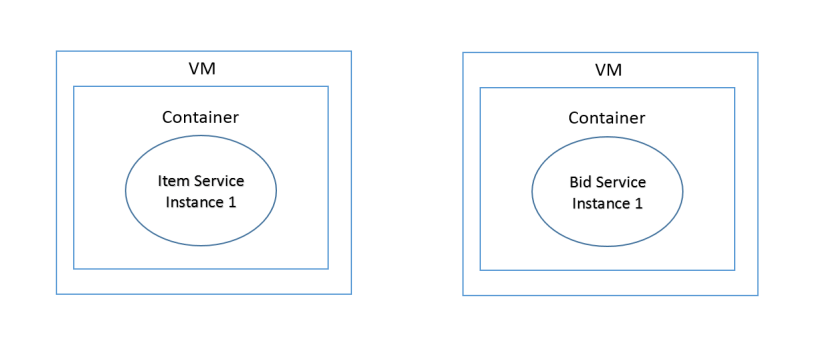

Service Instance per Host

Each service instance gets its own host, which can be a container or a virtual machine. This ensures that the services run in isolation. The setup is less error prone, but with more hosts to manage it is harder to maintain.

Containers

Containers are lightweight, fast, and relatively easy to start. Each one should be set up with every dependency the service needs, including the operating system files (the container still shares the host’s kernel). The services must be packaged as container images. Multiple containers can exist on the same host. Docker is a good solution here, and Docker containers can be managed by an orchestrator like Kubernetes.

Virtual Machines

Virtual machines are a more mature solution than containers. Resources can be assigned individually to each VM. AWS currently dominates this market with EC2. VM images are more difficult to build than container images, and VMs take longer to start.

Serverless

New kid on the block. The best-known serverless deployment environment is AWS Lambda. Just zip your microservice, add some metadata about the requests it must handle, send it to Lambda and grab a coffee. Run code, not servers, they tell us. It sounds super appealing. The downside: besides the money, each request must complete within 300 seconds (the limit at the time of writing; AWS has since raised it to 900 seconds). That sounds reasonable, but it depends on what the request must do. For example, a request that generates a huge report might take longer than that, and a consumer that keeps polling a queue won’t work, since it’s a long-running process. Lambda functions (FaaS) are stateless, which means your services must be stateless too.
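One way to live with the time limit and the statelessness requirement is to split long work into chunks and let the caller pass the resume position back in. The sketch below is plain Java and does not use the AWS SDK; `ChunkedReport`, `handle`, and the `remainingMillis` supplier are hypothetical names. In a real Lambda function the remaining time would come from the runtime (the Java `Context` object exposes `getRemainingTimeInMillis()`).

```java
import java.util.List;
import java.util.function.LongSupplier;

// Sketch only: a stateless handler that stops before its time budget runs out.
final class ChunkedReport {

    // Stop when less than this much time is left (assumed safety margin).
    static final long SAFETY_MARGIN_MS = 5_000;

    // Processes rows from startIndex onward while time remains.
    // Returns the index to resume from, or -1 when all rows are done.
    // No fields are read or written, so every invocation is independent.
    static int handle(List<String> rows, int startIndex, LongSupplier remainingMillis) {
        int i = startIndex;
        while (i < rows.size() && remainingMillis.getAsLong() > SAFETY_MARGIN_MS) {
            process(rows.get(i));
            i++;
        }
        return (i < rows.size()) ? i : -1;
    }

    // Placeholder for the real per-row work (hypothetical).
    private static void process(String row) {
        // e.g. render one line of the report
    }

    public static void main(String[] args) {
        List<String> rows = List.of("row-1", "row-2", "row-3");
        // With plenty of time left, the whole report finishes in one call.
        System.out.println(handle(rows, 0, () -> 60_000L));
    }
}
```

When the function returns a resume index instead of -1, the caller (or a queue message) triggers a new invocation with that index. All state travels in the request, so the function itself stays stateless.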

So there you go. Choose what works best for your situation.